This is a log collection system. Log collection is one part of an observability stack.

The observability stack has three parts:

- Monitoring

- Logging

- Distributed tracing

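ELK is used less often these days, for the following reasons:

- Logstash runs on JRuby (Java + Ruby)

- Complex syntax, and it is a heavyweight log collector

- Poor performance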

EFK (Elasticsearch, Fluentd, Kibana)

PLG (Promtail, Loki, Grafana)

The architecture we deploy here is:

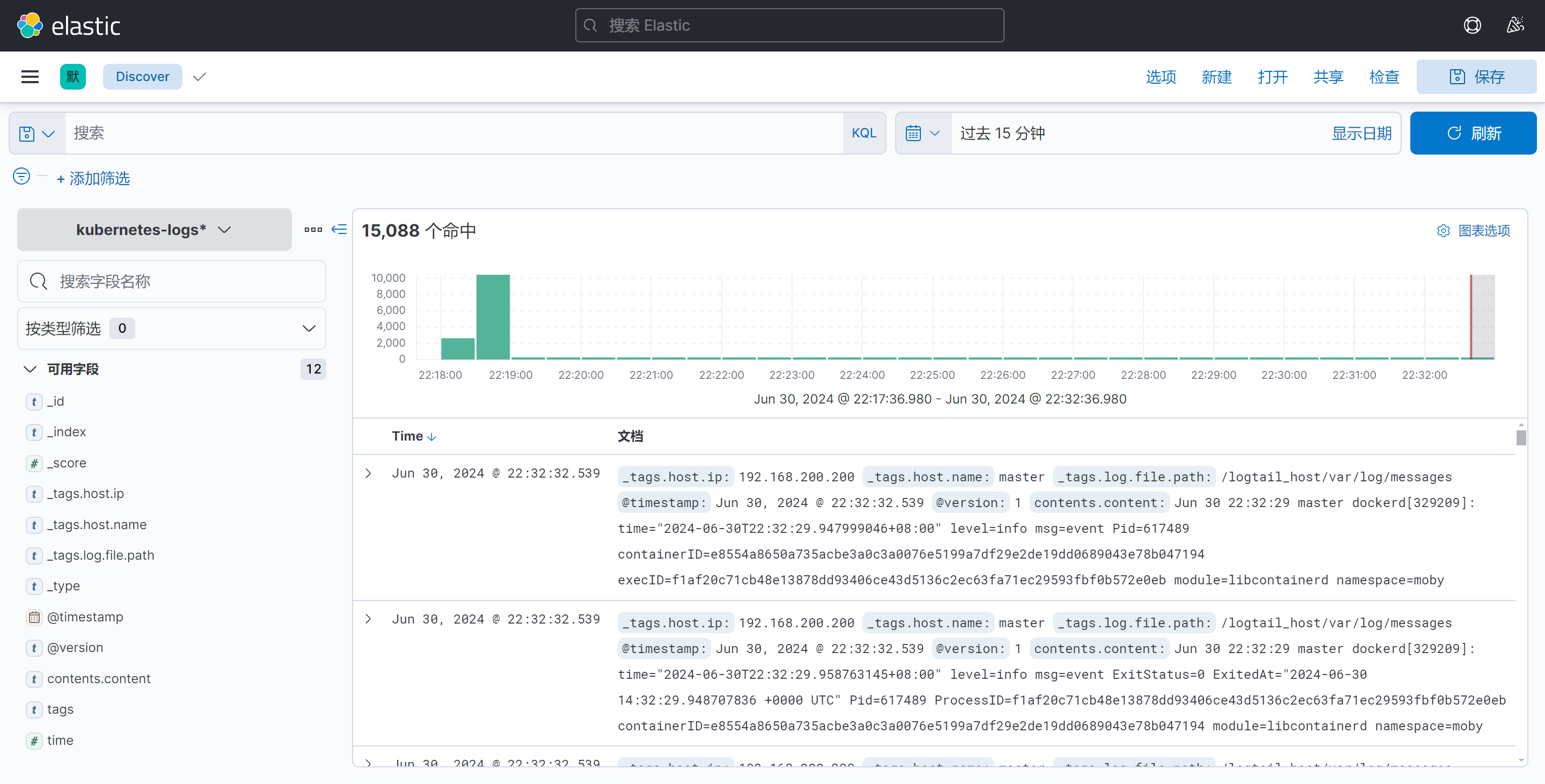

ilogtail ---> kafka ---> logstash ---> elasticsearch ---> kibana

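ilogtail collects logs and writes them to the Kafka message queue. Logstash then reads the logs from the queue and writes them to Elasticsearch (ES), and Kibana displays them.

The third stage is Logstash rather than ilogtail because writing from ilogtail to ES would require configuring ES authentication credentials, and our ES has no username or password configured. So we use Logstash instead.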

[root@master EFK]# vim es-svc.yaml

kind: Service
apiVersion: v1
metadata:
  name: elasticsearch
  namespace: logging
  labels:
    app: elasticsearch
spec:
  selector:
    app: elasticsearch
  clusterIP: None   # headless Service
  ports:
  - port: 9200
    name: rest
  - port: 9300
    name: inter-node

[root@master EFK]# vim es-sts.yaml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: es
  namespace: logging
spec:
  serviceName: elasticsearch
  replicas: 1
  selector:
    matchLabels:
      app: elasticsearch
  template:
    metadata:
      labels:
        app: elasticsearch
    spec:
      initContainers:
      - name: initc1
        image: busybox
        command: ["sysctl","-w","vm.max_map_count=262144"]
        securityContext:
          privileged: true
      - name: initc2
        image: busybox
        # ulimit only applies to this init container's own shell
        command: ["sh","-c","ulimit -n 65536"]
        securityContext:
          privileged: true
      - name: initc3
        image: busybox
        command: ["sh","-c","chmod 777 /data"]
        volumeMounts:
        - name: data
          mountPath: /data
      containers:
      - name: elasticsearch
        image: swr.cn-east-3.myhuaweicloud.com/hcie_openeuler/elasticsearch:7.17.1
        resources:
          limits:
            cpu: 1000m
          requests:
            cpu: 100m
        ports:
        - containerPort: 9200
          name: rest
          protocol: TCP
        - containerPort: 9300
          name: inter-node
          protocol: TCP
        volumeMounts:
        - name: data
          mountPath: /usr/share/elasticsearch/data
        env:
        - name: cluster.name
          value: k8s-logs
        - name: node.name
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: cluster.initial_master_nodes
          value: "es-0"
        - name: discovery.zen.minimum_master_nodes   # ignored by Elasticsearch 7.x
          value: "2"
        - name: discovery.seed_hosts
          value: "elasticsearch"
        - name: ES_JAVA_OPTS
          value: "-Xms512m -Xmx512m"
        - name: network.host
          value: "0.0.0.0"
  volumeClaimTemplates:
  - metadata:
      name: data
      labels:
        app: elasticsearch
    spec:
      accessModes: ["ReadWriteOnce"]
      resources:
        requests:
          storage: 10Gi

Apply the YAML files

[root@master EFK]# kubectl create ns logging

[root@master EFK]# kubectl apply -f .

service/elasticsearch created

statefulset.apps/es created

[root@master EFK]# kubectl get pods -n logging

NAME READY STATUS RESTARTS AGE

es-0 1/1 Running 0 46s

Once the pod shows Running, Elasticsearch is deployed.

For Kibana, I put all the required resources into a single YAML file:

apiVersion: v1
kind: ConfigMap
metadata:
  namespace: logging
  name: kibana-config
  labels:
    app: kibana
data:
  kibana.yml: |
    server.name: kibana
    server.host: "0.0.0.0"
    i18n.locale: zh-CN
    elasticsearch:
      hosts: ${ELASTICSEARCH_HOSTS}
---
apiVersion: v1
kind: Service
metadata:
  name: kibana
  namespace: logging
  labels:
    app: kibana
spec:
  ports:
  - port: 5601
  type: NodePort
  selector:
    app: kibana
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana
  namespace: logging
  labels:
    app: kibana
spec:
  selector:
    matchLabels:
      app: kibana
  template:
    metadata:
      labels:
        app: kibana
    spec:
      containers:
      - name: kibana
        image: swr.cn-east-3.myhuaweicloud.com/hcie_openeuler/kibana:7.17.1
        imagePullPolicy: IfNotPresent
        resources:
          limits:
            cpu: 1
          requests:
            cpu: 1
        env:
        - name: ELASTICSEARCH_URL
          value: http://elasticsearch:9200   # name of the headless Service
        - name: ELASTICSEARCH_HOSTS
          value: http://elasticsearch:9200   # name of the headless Service
        ports:
        - containerPort: 5601
        volumeMounts:
        - name: config
          mountPath: /usr/share/kibana/config/kibana.yml
          readOnly: true
          subPath: kibana.yml
      volumes:
      - name: config
        configMap:
          name: kibana-config

Look up the port and open Kibana

[root@master EFK]# kubectl get svc -n logging

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

elasticsearch ClusterIP None <none> 9200/TCP,9300/TCP 17m

kibana NodePort 10.104.94.122 <none> 5601:30980/TCP 4m30s

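Kibana's NodePort is 30980, so open that port in a browser.

That completes this part of the deployment. Next we need to deploy a log collection tool.

Fluentd is complicated to configure, so I use ilogtail to collect logs here.

We first deploy it with docker-compose. Once the whole platform is up, we will move ilogtail into the k8s cluster.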

[root@master ilogtail]# vim docker-compose.yaml

version: "3"
services:
  ilogtail:
    container_name: ilogtail
    image: sls-opensource-registry.cn-shanghai.cr.aliyuncs.com/ilogtail-community-edition/ilogtail:2.0.4
    network_mode: host
    volumes:
      - /:/logtail_host:ro
      - /var/run:/var/run
      - ./checkpoint:/usr/local/ilogtail/checkpoint
      - ./config:/usr/local/ilogtail/config/local

Start the container

[root@master ilogtail]# docker-compose up -d

[root@master ilogtail]# docker ps |grep ilogtail

eac545d4da87 sls-opensource-registry.cn-shanghai.cr.aliyuncs.com/ilogtail-community-edition/ilogtail:2.0.4 "/usr/local/ilogtail…" 10 seconds ago Up 9 seconds ilogtail

The container is now running.

[root@master ilogtail]# cd config/

[root@master config]# vim sample-stdout.yaml

enable: true
inputs:
  - Type: input_file          # file input
    FilePaths:
      - /logtail_host/var/log/messages
flushers:
  - Type: flusher_stdout      # write to standard output
    OnlyStdout: true

[root@master config]# docker restart ilogtail

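/logtail_host/var/log/messages: the path looks like this because we mounted the host's / at /logtail_host inside the container, so the host's log file appears in the container at /logtail_host/var/log/messages.

After writing the config file we still have to restart the container so that it reads the configuration, hence the restart.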
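The mount-based path mapping described above can be sketched in a few lines of Python. This is only an illustration, assuming the host root is mounted at /logtail_host as in the docker-compose file; the helper name `to_container_path` is hypothetical and not part of ilogtail.

```python
from pathlib import PurePosixPath

# Container path where the host's / is mounted (taken from the compose file).
HOST_ROOT_MOUNT = PurePosixPath("/logtail_host")


def to_container_path(host_path):
    """Translate an absolute host path into the path seen inside the container."""
    path = PurePosixPath(host_path)
    if not path.is_absolute():
        raise ValueError(f"expected an absolute host path, got {host_path!r}")
    # Drop the leading "/" so the path can be joined under the mount point.
    return str(HOST_ROOT_MOUNT / path.relative_to("/"))


print(to_container_path("/var/log/messages"))
# /logtail_host/var/log/messages
```

Any host file you want to collect has to be written in FilePaths in this translated form.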

[root@master config]# docker logs ilogtail

2024-06-30 11:16:25 {"content":"Jun 30 19:16:22 master dockerd[1467]: time=\"2024-06-30T19:16:22.251108165+08:00\" level=info msg=\"handled exit event processID=9a8df40981b3609897794e50aeb2bde805eab8a75334266d7b5c2899f61d486e containerID=61770e8f88e3c6a63e88f2a09d2683c6ccce1e13f6d4a5b6f79cc4d49094bab4 pid=125402\" module=libcontainerd namespace=moby","__time__":"1719746182"}

2024-06-30 11:16:25 {"content":"Jun 30 19:16:23 master kubelet[1468]: E0630 19:16:23.594557 1468 kubelet_volumes.go:245] \"There were many similar errors. Turn up verbosity to see them.\" err=\"orphaned pod \\\"9d5ae64f-1341-4c15-b70f-1c8f71efc20e\\\" found, but error not a directory occurred when trying to remove the volumes dir\" numErrs=2","__time__":"1719746184"}

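As you can see, the host's logs are collected successfully, and each host log line is wrapped in the content field. If no logs show up in the output, check the file named ilogtail.LOG inside the container rather than relying on docker logs ilogtail.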
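To pull the original log line back out of that output, a minimal sketch might look like the following. It assumes each line is a timestamp prefix followed by one JSON object, as in the sample above, and that `__time__` is a Unix timestamp in seconds. `parse_stdout_line` is a hypothetical helper, and the sample line is abbreviated from the real output.

```python
import json
from datetime import datetime, timezone


def parse_stdout_line(line):
    """Split one flusher_stdout line into its JSON event, dropping the prefix."""
    start = line.index("{")  # the JSON event starts at the first brace
    event = json.loads(line[start:])
    # __time__ arrives as a string holding seconds since the epoch.
    event["collected_at"] = datetime.fromtimestamp(
        int(event["__time__"]), tz=timezone.utc
    )
    return event


sample = (
    '2024-06-30 11:16:25 {"content":"Jun 30 19:16:22 master dockerd[1467]: '
    'handled exit event","__time__":"1719746182"}'
)
event = parse_stdout_line(sample)
print(event["content"])
print(event["collected_at"].isoformat())
```

The second print shows the collection time in UTC, which is eight hours behind the local time embedded in the syslog line.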

[root@master config]# cp sample-stdout.yaml docker-stdout.yaml

# To keep two pipelines from writing to stdout at once and cluttering the logs, temporarily disable sample-stdout

[root@master config]# cat sample-stdout.yaml

enable: false                 # change this to false
inputs:
  - Type: input_file          # file input
    FilePaths:
      - /logtail_host/var/log/messages
flushers:
  - Type: flusher_stdout      # write to standard output
    OnlyStdout: true

[root@master config]# cat docker-stdout.yaml

enable: true
inputs:
  - Type: service_docker_stdout
    Stdout: true              # collect standard output
    Stderr: false             # do not collect standard error
flushers:
  - Type: flusher_stdout
    OnlyStdout: true

[root@master config]# docker restart ilogtail

ilogtail

2024-06-30 11:24:13 {"content":"2024-06-30 11:24:10 {\"content\":\"2024-06-30 11:24:07 {\\\"content\\\":\\\"2024-06-30 11:24:04.965 [INFO][66] felix/summary.go 100: Summarising 12 dataplane reconciliation loops over 1m3.4s: avg=3ms longest=12ms ()\\\",\\\"_time_\\\":\\\"2024-06-30T11:24:04.965893702Z\\\",\\\"_source_\\\":\\\"stdout\\\",\\\"_container_ip_\\\":\\\"192.168.200.200\\\",\\\"_image_name_\\\":\\\"calico/node:v3.23.5\\\",\\\"_container_name_\\\":\\\"calico-node\\\",\\\"_pod_name_\\\":\\\"calico-node-hgqzr\\\",\\\"_namespace_\\\":\\\"kube-system\\\",\\\"_pod_uid_\\\":\\\"4d0d950c-346a-4f81-817c-c19526700542\\\",\\\"__time__\\\":\\\"1719746645\\\"}\",\"_time_\":\"2024-06-30T11:24:07.968118197Z\",\"_source_\":\"stdout\",\"_container_ip_\":\"192.168.200.200\",\"_image_name_\":\"sls-opensource-registry.cn-shanghai.cr.aliyuncs.com/ilogtail-community-edition/ilogtail:2.0.4\",\"_container_name_\":\"ilogtail\",\"__time__\":\"1719746647\"}","_time_":"2024-06-30T11:24:10.971474647Z","_source_":"stdout","_container_ip_":"192.168.200.200","_image_name_":"sls-opensource-registry.cn-shanghai.cr.aliyuncs.com/ilogtail-community-edition/ilogtail:2.0.4","_container_name_":"ilogtail","__time__":"1719746650"}

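If the logs show up as expected, collection works. Next we deploy Kafka to receive the logs from ilogtail. Remember to turn log collection off in the meantime, otherwise the VM's disk will fill up quickly.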
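The sample output above nests content inside content: ilogtail is collecting its own stdout, so every flushed line is collected again and wrapped once more, which is part of why the disk fills so quickly. A rough sketch that walks the nesting, using a synthetic line built in the same shape (the helper names `wrap` and `nesting_chain` are hypothetical):

```python
import json


def wrap(content, container):
    """Build a line shaped like flusher_stdout output (synthetic, for the demo)."""
    event = {"content": content, "_container_name_": container}
    return "2024-06-30 11:24:10 " + json.dumps(event)


def nesting_chain(line):
    """Return the container name at each nesting level, outermost first."""
    chain = []
    text = line
    while "{" in text:
        try:
            event = json.loads(text[text.index("{"):])
        except json.JSONDecodeError:
            break  # the remaining text is a plain log line, not a wrapped event
        chain.append(event.get("_container_name_", "?"))
        text = event.get("content", "")
    return chain


inner = wrap("felix/summary.go 100: Summarising 12 dataplane loops", "calico-node")
outer = wrap(wrap(inner, "ilogtail"), "ilogtail")
print(nesting_chain(outer))
# ['ilogtail', 'ilogtail', 'calico-node']
```

Each extra 'ilogtail' entry is one more trip through the collector, and every trip adds another layer of escaping to the stored line.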

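As a message queue, Kafka has producers and consumers. The producer here is ilogtail, which writes logs into Kafka. The consumer is Logstash, which reads logs from Kafka and writes them to ES.

Apache Kafka is a distributed, fault-tolerant messaging system based on publish/subscribe. Its main features are:

- High throughput, low latency: it can reach throughput on the order of millions of messages per second with low latency (most other message queues can do much the same)

- Durability and reliability: messages are persisted to local disk, data can be replicated to prevent loss, and a retention period can be configured so that consumers can read the same messages more than once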
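The idea that messages stay in the log and each consumer tracks its own position can be illustrated with a toy in-memory model. This is a sketch of the concept only, not the Kafka client API; `TopicLog` and its methods are hypothetical names, and real Kafka adds partitions, replication, and retention on top.

```python
class TopicLog:
    """Toy append-only log: reading does not remove messages."""

    def __init__(self):
        self._messages = []
        self._offsets = {}  # consumer group -> next offset to read

    def produce(self, message):
        """Append a message and return its offset."""
        self._messages.append(message)
        return len(self._messages) - 1

    def consume(self, group, max_messages=10, reset="earliest"):
        """Return the next batch for a group and advance that group's offset."""
        if group not in self._offsets:
            # A new group starts at the beginning or at the current end.
            self._offsets[group] = 0 if reset == "earliest" else len(self._messages)
        start = self._offsets[group]
        batch = self._messages[start:start + max_messages]
        self._offsets[group] = start + len(batch)
        return batch


topic = TopicLog()
for line in ["log line 1", "log line 2", "log line 3"]:
    topic.produce(line)  # the producer role, played by ilogtail in this setup

print(topic.consume("logstash"))  # all three lines
print(topic.consume("logstash"))  # [] because this group is caught up
print(topic.consume("audit"))     # a second group reads all three again
```

Because the log keeps its messages, adding a second consumer later does not take anything away from the first one.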

The Kafka project did not provide an official Docker image for the version used here (2.8.1), so we use a third-party image.

version: '3'
services:
  zookeeper:
    image: quay.io/3330878296/zookeeper:3.8
    network_mode: host
    container_name: zookeeper-test
    volumes:
      - zookeeper_vol:/data
      - zookeeper_vol:/datalog
      - zookeeper_vol:/logs
  kafka:
    image: quay.io/3330878296/kafka:2.13-2.8.1
    network_mode: host
    container_name: kafka
    environment:
      KAFKA_ADVERTISED_HOST_NAME: "192.168.200.200"
      KAFKA_ZOOKEEPER_CONNECT: "192.168.200.200:2181"
      KAFKA_LOG_DIRS: "/kafka/logs"
    volumes:
      - kafka_vol:/kafka
    depends_on:
      - zookeeper
volumes:
  zookeeper_vol: {}
  kafka_vol: {}

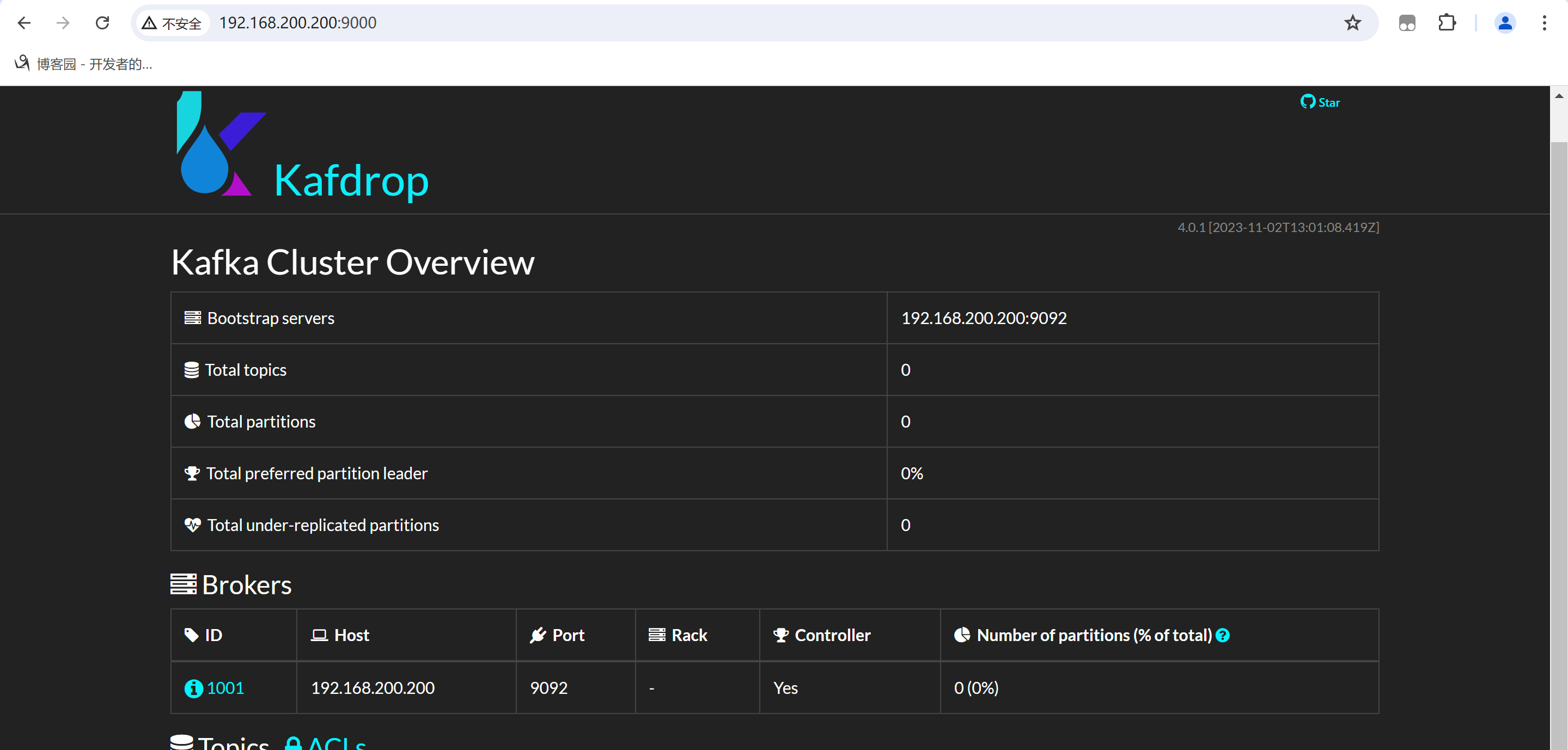

[root@master kafka]# docker run -d --rm -p 9000:9000 \
    -e KAFKA_BROKERCONNECT=192.168.200.200:9092 \
    -e SERVER_SERVLET_CONTEXTPATH="/" \
    quay.io/3330878296/kafdrop

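Once it is deployed you can use the web UI. We deploy it because, after writing logs to Kafka, the web UI lets us check directly whether they actually arrived, which is more intuitive than the Kafka command line.

Open ip:9000 in a browser.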

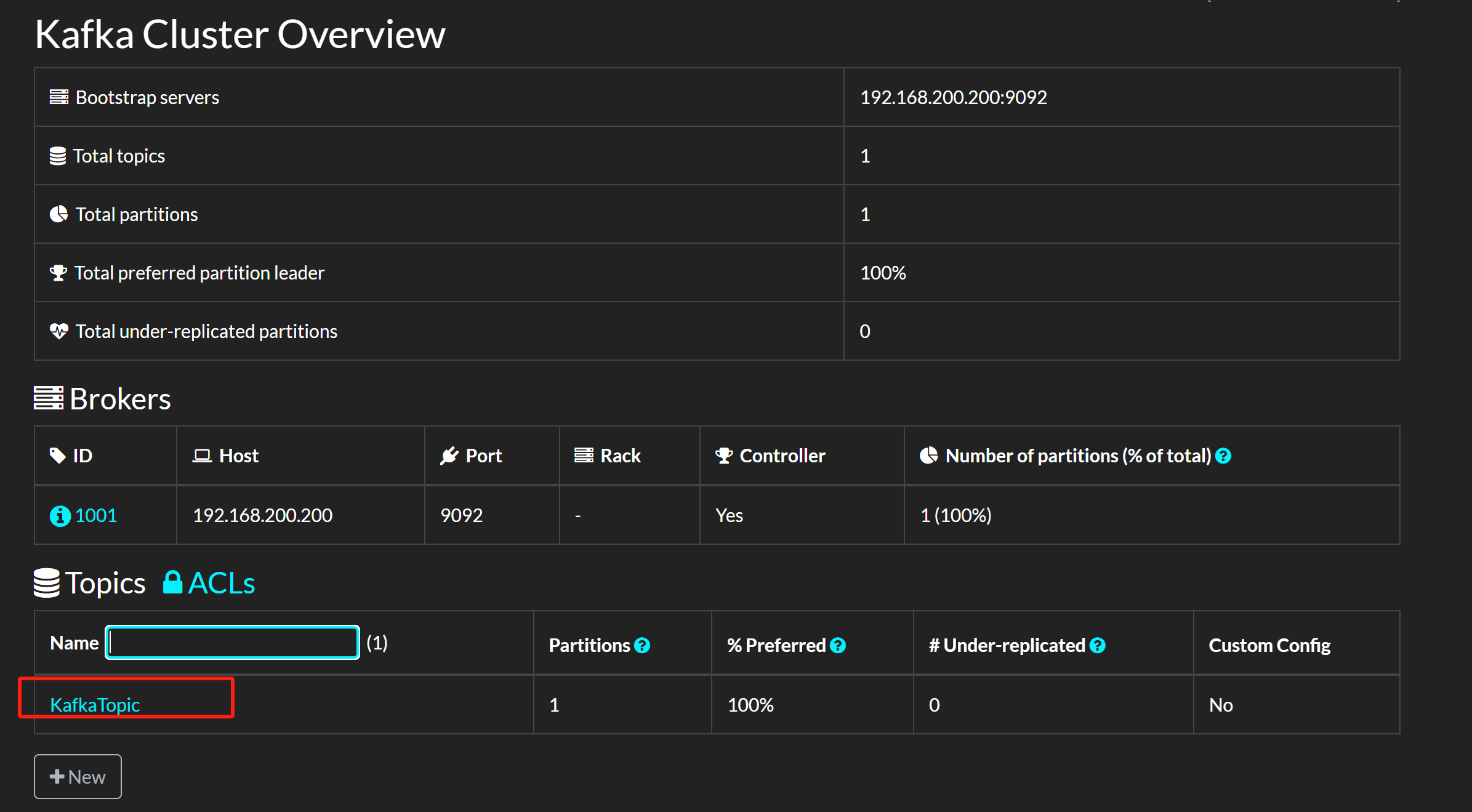

[root@master config]# cd /root/ilogtail/config

[root@master config]# cp sample-stdout.yaml kafka.yaml

[root@master config]# vim kafka.yaml

enable: true
inputs:
  - Type: input_file
    FilePaths:
      - /logtail_host/var/log/messages
flushers:
  - Type: flusher_kafka_v2
    Brokers:
      - 192.168.200.200:9092
    Topic: KafkaTopic

[root@master config]# docker restart ilogtail

ilogtail

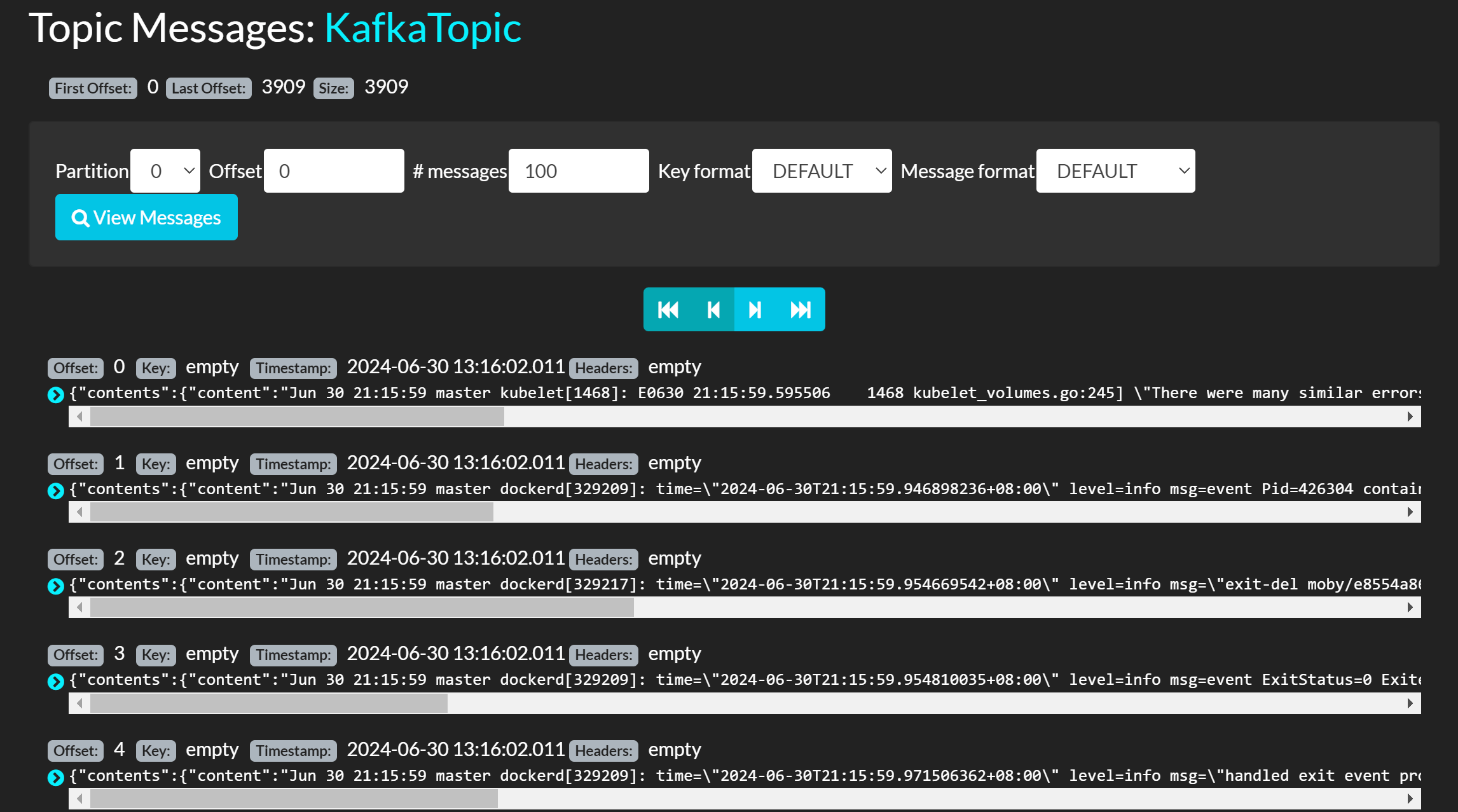

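Back in the web UI, a topic now appears.

Click into it to see which log entries were written.

If the logs are visible, everything is fine. Next, deploy Logstash.

Logstash reads messages from Kafka and writes them to ES.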

[root@master ~]# mkdir logstash

[root@master ~]# cd logstash

[root@master logstash]# vim docker-compose.yaml

version: '3'
services:
  logstash:
    image: quay.io/3330878296/logstash:8.10.1
    container_name: logstash
    network_mode: host
    environment:
      LS_JAVA_OPTS: "-Xmx1g -Xms1g"
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /apps/logstash/config/logstash.yml:/usr/share/logstash/config/logstash.yml
      - /apps/logstash/pipeline:/usr/share/logstash/pipeline
      - /var/log:/var/log

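With the docker-compose file written, don't start it yet, because the config files we mount into the container have not been created.

Write the config files now.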

[root@master logstash]# mkdir -p /apps/logstash/{config,pipeline}

[root@master logstash]# cd /apps/logstash/config/

[root@master config]# vim logstash.yml

pipeline.workers: 2

pipeline.batch.size: 10

pipeline.batch.delay: 5

config.reload.automatic: true

config.reload.interval: 60s

With this file in place, start the Logstash container.

[root@master config]# cd /root/logstash

[root@master logstash]# docker-compose up -d

[root@master logstash]# docker ps |grep logstash

60dfde4df40d quay.io/3330878296/logstash:8.10.1 "/usr/local/bin/dock…" 2 minutes ago Up 2 minutes logstash

Once it is up, this step is done.

To have Logstash send logs to ES, we need to write some rules in the pipeline directory.

[root@master EFK]# cd /apps/logstash/pipeline/

[root@master pipeline]# vim logstash.conf

input {
  kafka {
    # Kafka broker address
    bootstrap_servers => "192.168.200.200:9092"
    # Topics to read from; they must already exist
    topics => ["KafkaTopic"]
    # Where to start reading; earliest means from the beginning
    auto_offset_reset => "earliest"
    codec => "json"
    # When one Logstash has several input plugins, give each plugin its own id
    # id => "kubernetes"
    # group_id => "kubernetes"
  }
}

filter {
  json {
    # Nested fields use bracket syntax in Logstash field references
    source => "[event][original]"
  }
  mutate {
    remove_field => ["[event][original]", "event"]
  }
}

output {
  elasticsearch {
    hosts => ["http://192.168.200.200:9200"]
    # MM is the month; lowercase mm would be the minute
    index => "kubernetes-logs-%{+YYYY.MM}"
  }
}

[root@master EFK]# kubectl expose pod es-0 -n logging --type NodePort --port 9200 --target-port 9200

service/es-0 exposed

[root@master EFK]# kubectl get svc -n logging

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

elasticsearch ClusterIP None <none> 9200/TCP,9300/TCP 3h38m

es-0 NodePort 10.97.238.173 <none> 9200:32615/TCP 2s

kibana NodePort 10.106.1.52 <none> 5601:30396/TCP 3h38m

This maps port 9200 to NodePort 32615 on the node, so we change the ES address in the Logstash output to port 32615.
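Reading the PORT(S) column is easy to get wrong, so here is a small sketch that splits an entry such as 9200:32615/TCP into the Service port and the NodePort and builds the URL that Logstash should use. The helper names are hypothetical, and the node IP is the one used throughout this post.

```python
from typing import NamedTuple, Optional


class PortMapping(NamedTuple):
    port: int                 # Service port inside the cluster
    node_port: Optional[int]  # port opened on the node, None if not exposed
    protocol: str


def parse_ports(column):
    """Parse the PORT(S) column of `kubectl get svc` into PortMapping items."""
    mappings = []
    for entry in column.split(","):
        ports, protocol = entry.split("/")
        if ":" in ports:
            port, node_port = ports.split(":")
            mappings.append(PortMapping(int(port), int(node_port), protocol))
        else:
            mappings.append(PortMapping(int(ports), None, protocol))
    return mappings


def node_url(node_ip, mapping):
    """Build the URL reachable from outside the cluster network."""
    if mapping.node_port is None:
        raise ValueError("this port is not exposed through a NodePort")
    return f"http://{node_ip}:{mapping.node_port}"


es = parse_ports("9200:32615/TCP")[0]
print(node_url("192.168.200.200", es))
# http://192.168.200.200:32615
```

Logstash runs in Docker with host networking rather than inside the cluster, which is why it needs the NodePort address and not the Service name.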

output {
  elasticsearch {
    hosts => ["http://192.168.200.200:32615"]
    # MM is the month; lowercase mm would be the minute
    index => "kubernetes-logs-%{+YYYY.MM}"
  }
}
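The date part of the index name is case sensitive: in the Joda-style pattern inside %{+...}, MM means month of year and mm means minute of hour. The sketch below imitates that with Python's strftime to show how different the resulting index names are. It handles only the four tokens listed and is not how Logstash itself is implemented; `render_index` is a hypothetical helper.

```python
from datetime import datetime, timezone

# Only the tokens needed for this illustration.
JODA_TO_STRFTIME = {"YYYY": "%Y", "MM": "%m", "dd": "%d", "mm": "%M"}


def render_index(prefix, joda_pattern, ts):
    """Render an index name for an event timestamp using a Joda-like pattern."""
    fmt = joda_pattern
    for token, directive in JODA_TO_STRFTIME.items():
        fmt = fmt.replace(token, directive)
    return prefix + ts.strftime(fmt)


ts = datetime(2024, 6, 30, 11, 16, 22, tzinfo=timezone.utc)
print(render_index("kubernetes-logs-", "YYYY.MM", ts))  # kubernetes-logs-2024.06
print(render_index("kubernetes-logs-", "YYYY.mm", ts))  # kubernetes-logs-2024.16
```

With the minute pattern, events can land in up to sixty differently named indices per year instead of one index per month.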

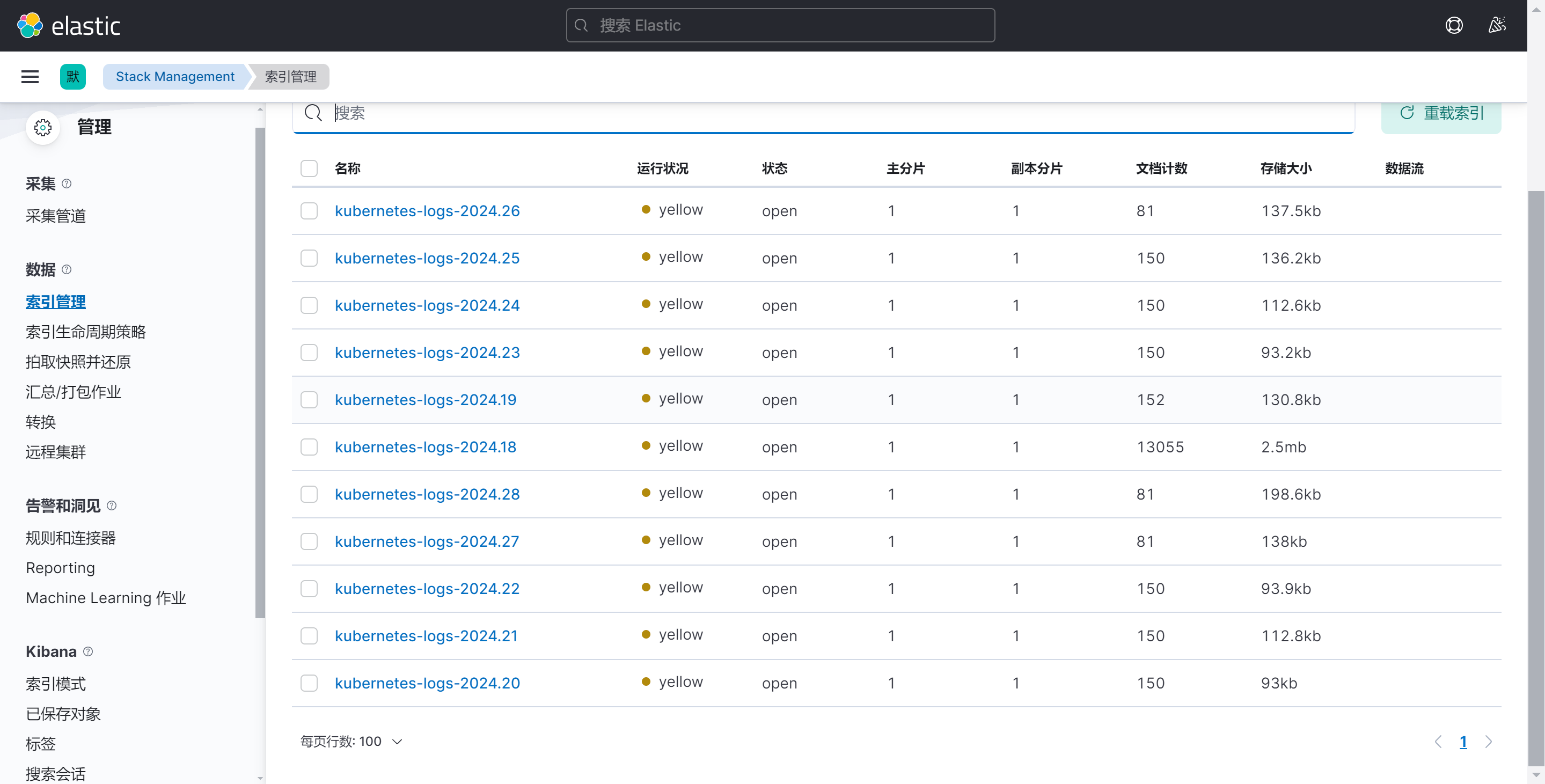

Then restart Logstash

[root@master pipeline]# docker restart logstash

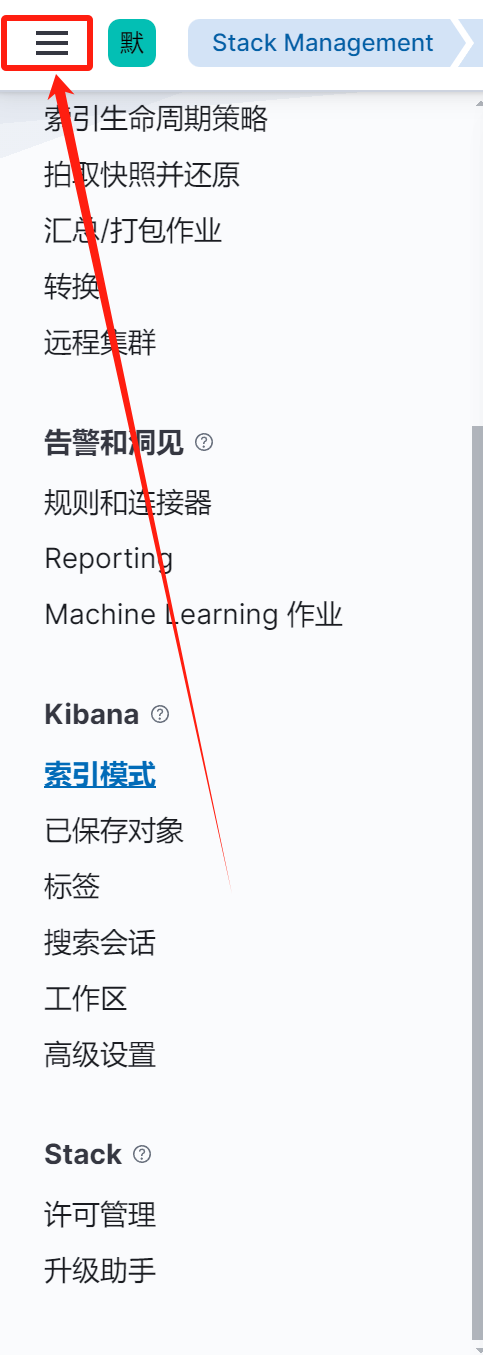

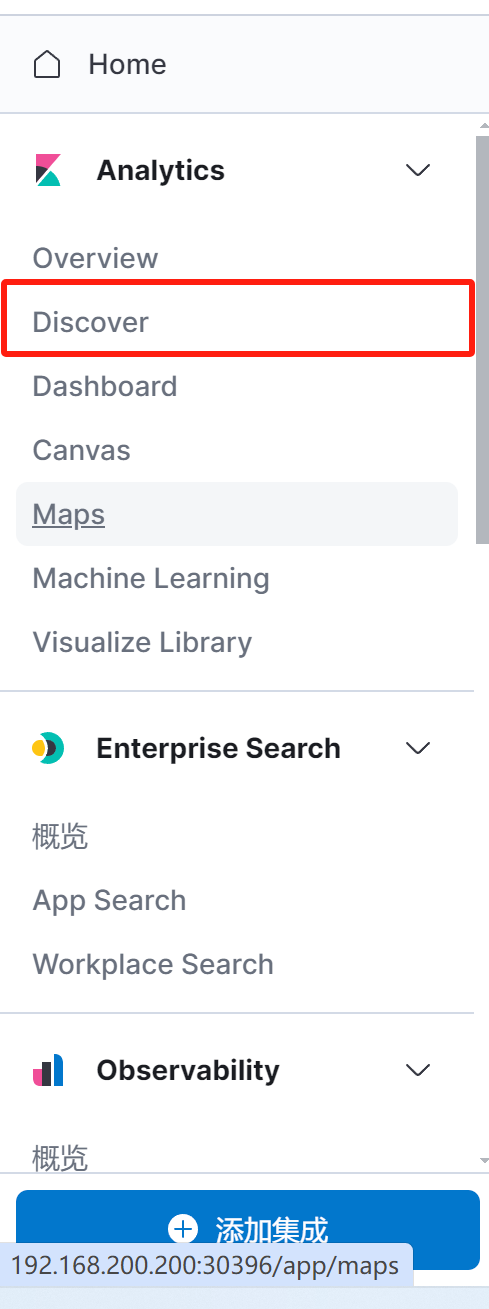

Click Stack Management

Click Index Management. If an index is listed there, everything is working.

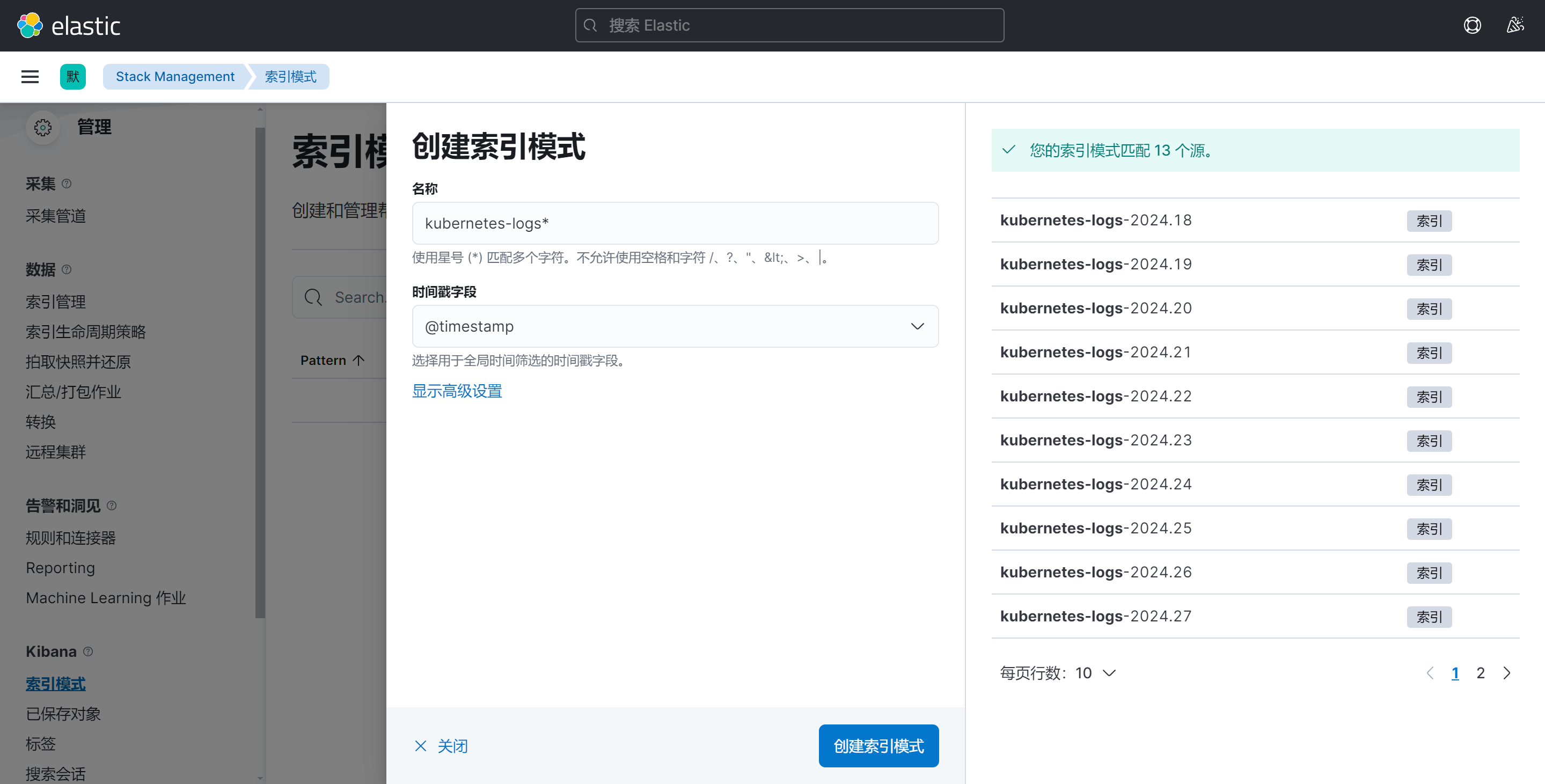

Click Index Patterns and create an index pattern.